Spatial Analytics: The Missing Layer in Drone and Robotics Performance

Performance Data Without Context

Drones and robots generate enormous volumes of data. Flight telemetry, movement, sensor logs, system states, error codes, and success/failure flags are captured on every run. Yet teams still struggle to answer basic questions after a mission, test, or deployment:

- Why did the system behave the way it did in this environment?

- Where did performance degrade or decision-making break down?

- What actually happened in space and time?

The issue isn’t a lack of data. It’s a lack of spatial context. Without understanding behaviour in 3D space, performance analysis for drones and robotics remains incomplete.

As autonomy increases and environments become more complex, this gap widens. Teams need more than telemetry; they need a way to see behaviour as it unfolded in the real world.

This is where spatial analytics for drones and robotics becomes the missing layer.

The same spatial intelligence that powers Cognitive3D’s immersive analytics can unlock insights for autonomous systems.

The Limits of Traditional Drone and Robotics Analytics

Most drone and robotics analytics stacks focus on time-series data:

- Sensor readings over time

- System health metrics

- Task completion states

- Error events

These are essential, but they flatten behaviour into rows and 2D charts. When systems operate in physical environments, such as airspace, buildings, terrain, or dynamic scenes, this abstraction hides the most important information: how behaviour unfolded spatially.

A few common failure modes illustrate the gap:

- A drone mission completes successfully, but flight paths show unnecessary detours that increase battery drain.

- A mobile robot repeatedly fails in the same area, but logs don’t explain where or why.

- An autonomous system technically “detects” an obstacle, but reacts too late or inconsistently depending on approach angle.

In each case, the root cause lives in space, not in a table.
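To make the first failure mode concrete, here is a minimal sketch of a detour check. It assumes a flight path is available as a list of (x, y, z) positions in metres; the function names and the sample path are hypothetical and chosen only for illustration.

```python
import math

def path_length(points):
    """Sum of straight-line segment lengths along a list of (x, y, z) points."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))

def detour_ratio(points):
    """Travelled distance divided by the straight-line start-to-end distance.

    1.0 means a perfectly direct path; larger values mean more detour.
    """
    direct = math.dist(points[0], points[-1])
    if direct == 0:
        return math.inf  # start and end coincide: the ratio is unbounded
    return path_length(points) / direct

# Hypothetical flight path in metres: a dog-leg instead of a straight line.
flight = [(0, 0, 10), (30, 0, 10), (30, 40, 10)]
ratio = detour_ratio(flight)
print(f"detour ratio: {ratio:.2f}")
```

A mission like this one would still be logged as a success, yet the path is 40% longer than the direct route. That is the kind of inefficiency a success flag alone cannot show.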

What this points to: traditional analytics can tell you that something happened, but not how it happened in the environment. Until spatial context is captured, teams are forced to infer behaviour instead of directly observing it.

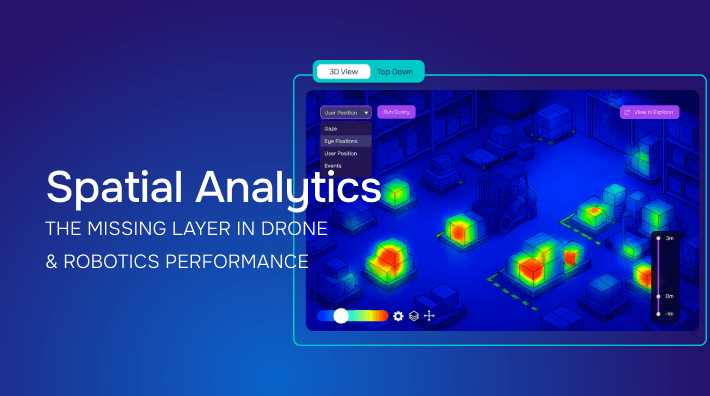

What Spatial Analytics Adds

Spatial analytics treats movement, orientation, attention, and interaction as first-class data.

Instead of asking only when something happened, spatial analytics asks:

- Where did the system move?

- What areas did it avoid, revisit, or struggle with?

- How did orientation, focus, or perception change over time?

- How did environment layout influence behaviour?

Practically, this requires three capabilities:

- Tracking spatial behaviour - capturing position, rotation, movement paths, and interactions within an environment. E.g., a robot repeatedly oscillates before turning into a narrow corridor.

- Exploring individual runs - replaying sessions in 3D to see exactly what happened. E.g., scrubbing the timeline reveals hesitation when obstacle distance falls below a threshold.

- Analyzing patterns across runs - aggregating spatial data to identify trends, hotspots, and failure zones. E.g., across 120 runs, a specific corridor accounts for 63% of slowdowns.

Together, these capabilities turn raw telemetry into actionable insight.
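As one illustration of turning tracked orientation into a signal, the oscillating-robot example can be approximated by counting heading reversals. This is a rough sketch, assuming heading is logged as yaw in degrees at a steady rate; the function name and the `min_step` noise threshold are hypothetical choices, not part of any particular product.

```python
def heading_reversals(yaw_deg, min_step=2.0):
    """Count sign flips in heading change, ignoring steps smaller than min_step degrees."""
    reversals = 0
    last_sign = 0
    for a, b in zip(yaw_deg, yaw_deg[1:]):
        # Shortest signed turn between samples, wrapped into [-180, 180).
        delta = (b - a + 180.0) % 360.0 - 180.0
        if abs(delta) < min_step:
            continue  # treat tiny changes as sensor noise
        sign = 1 if delta > 0 else -1
        if last_sign and sign != last_sign:
            reversals += 1
        last_sign = sign
    return reversals

# Hypothetical yaw trace: the robot swings left and right before committing to a turn.
yaw_trace = [0, 10, 0, 10, 0, 90]
print(heading_reversals(yaw_trace))
```

A high reversal count in a short window is one possible marker of hesitation. A replay of the same segment would show where in the environment it occurred.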

Why this matters: once teams can see behaviour spatially, they stop debating interpretations of logs and start diagnosing real-world causes. This shift, from abstract metrics to observable behaviour, is what enables faster iteration, safer systems, and more reliable autonomy.

A Proven Pattern from XR Analytics

Extended reality (XR) teams face a closely related problem. In VR and AR, users move freely through 3D environments, interact with objects, and make decisions based on spatial context. Traditional analytics was never sufficient to explain what worked, what didn’t, or why.

To solve this, XR analytics evolved around a spatial-first model:

- Complete session recording of movement, orientation, inputs, and environment state

- 3D session replay to reconstruct individual experiences exactly as they occurred

- Aggregate spatial analysis such as heatmaps, focus zones, and path comparisons

This approach allows XR teams to:

- Diagnose failures by replaying real sessions instead of guessing

- Compare successful and unsuccessful behaviours spatially

- Identify environment design issues that block performance

- Validate improvements by comparing behaviour across versions

The key insight: behaviour is much better understood when you can see it in space.

Why this is important beyond XR: this spatial-first model is no longer theoretical. It is operational infrastructure for teams that depend on 3D performance insight.

That same analytical pattern applies directly to drones, robots, and autonomous systems operating in the physical world.

Applying the XR Spatial Analytics Pattern to Drones and Robots

While the hardware and use cases differ, the analytical pattern is the same. Systems operating in 3D environments require analytics that preserve spatial truth.

1. Track Behaviour in Space

For drones and robots, this means capturing:

- Movement paths and trajectories

- Orientation and heading changes

- Proximity to obstacles or zones

- Interaction points with the environment

Without this layer, teams are left inferring spatial behaviour from indirect signals.
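A minimal sketch of what such a tracking record might look like, together with a simple proximity query. The `PoseSample` fields, the units, and the representation of obstacles as points are all assumptions made for illustration; a real system would likely use richer geometry.

```python
from dataclasses import dataclass
import math

@dataclass(frozen=True)
class PoseSample:
    t: float        # seconds since the start of the run
    x: float        # position in metres
    y: float
    z: float
    yaw_deg: float  # heading in degrees

def min_clearance(samples, obstacles):
    """Return (clearance_m, time_s) of the closest approach to any obstacle point."""
    best = (math.inf, None)
    for s in samples:
        for o in obstacles:
            d = math.dist((s.x, s.y, s.z), o)
            if d < best[0]:
                best = (d, s.t)
    return best

# Hypothetical run: three samples passing one obstacle point.
run = [
    PoseSample(0.0, 0.0, 0.0, 1.0, 0.0),
    PoseSample(1.0, 2.0, 0.0, 1.0, 0.0),
    PoseSample(2.0, 4.0, 0.0, 1.0, 90.0),
]
obstacles = [(2.0, 1.5, 1.0)]
clearance, when = min_clearance(run, obstacles)
print(f"closest approach: {clearance:.2f} m at t={when:.1f} s")
```

Keeping position, heading, and time together in one record is what lets later steps answer "where" and "when" from the same data.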

Expanded insight: spatial tracking reveals inefficiencies, hesitation, and risk that never appear in success metrics alone. It shows how the system navigated reality, not just whether it reached an endpoint.

2. Replay Individual Runs

Replay transforms debugging and review. Instead of interpreting logs, teams can:

- Reconstruct missions in 3D

- Scrub timelines to see where decisions changed

- Observe hesitation, oscillation, or avoidance behaviour

Replay answers the question “what actually happened?” far faster than dashboards alone.
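Scrubbing a timeline comes down to asking where the system was at an arbitrary time. The sketch below shows one simple way to do that, assuming a run is stored as time-sorted (t, x, y, z) tuples; the function name is hypothetical, and orientation is left out because interpolating angles needs wrap-around handling.

```python
from bisect import bisect_right

def pose_at(samples, t):
    """Linearly interpolate (x, y, z) at time t from time-sorted (t, x, y, z) samples."""
    times = [s[0] for s in samples]
    if t <= times[0]:
        return tuple(samples[0][1:])   # clamp before the first sample
    if t >= times[-1]:
        return tuple(samples[-1][1:])  # clamp after the last sample
    i = bisect_right(times, t)         # index of the first sample later than t
    t0, *p0 = samples[i - 1]
    t1, *p1 = samples[i]
    w = (t - t0) / (t1 - t0)
    return tuple(a + w * (b - a) for a, b in zip(p0, p1))

# Hypothetical two-sample run: the scrubber is a quarter of the way through.
recorded = [(0.0, 0.0, 0.0, 0.0), (2.0, 4.0, 0.0, 2.0)]
print(pose_at(recorded, 0.5))
```

Linear interpolation is a deliberate simplification here. It is adequate when samples are dense, and it keeps the lookup cheap enough to run on every scrubber movement.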

Expanded insight: replay creates a shared understanding across engineering, operations, and safety teams. Everyone sees the same evidence, reducing guesswork and accelerating alignment.

3. Analyze Spatial Patterns Across Runs

Once spatial data is aggregated, new insights emerge:

- Areas where systems consistently slow down or fail

- Environmental features that trigger errors

- Differences between human-operated and autonomous behaviour

- Performance changes across software versions or training updates

These insights are difficult, and often impossible, to extract from non-spatial analytics.
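As a rough sketch of how aggregation across runs could surface slowdown zones, the example below bins low-speed samples into a 2D grid. The run format of (x, y, speed) tuples, the cell size, and the speed threshold are assumptions for illustration only.

```python
from collections import Counter

def slowdown_hotspots(runs, cell_size=1.0, slow_speed=0.2):
    """Count, per grid cell, how many samples across all runs were below slow_speed.

    runs: iterable of runs; each run is a list of (x, y, speed) samples.
    """
    counts = Counter()
    for run in runs:
        for x, y, speed in run:
            if speed < slow_speed:
                cell = (int(x // cell_size), int(y // cell_size))
                counts[cell] += 1
    return counts

# Hypothetical data: two runs that both slow down near x=3, y=1.
runs = [
    [(0.5, 0.5, 1.0), (3.2, 1.1, 0.1), (3.8, 1.4, 0.05)],
    [(0.4, 0.6, 0.9), (3.5, 1.9, 0.15), (6.0, 2.0, 1.1)],
]
hotspots = slowdown_hotspots(runs)
top_cell, top_count = hotspots.most_common(1)[0]
print(top_cell, top_count)
```

The same binning works for error events or near-misses in place of low speed, and the resulting counts map directly onto a heatmap of the environment.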

Expanded insight: pattern analysis turns isolated incidents into systemic understanding, helping teams prioritize fixes that have the greatest real-world impact.

Why This Matters for Performance, Safety, and Autonomy

As drones and robots move toward greater autonomy, the cost of misunderstanding behaviour increases.

Spatial analytics supports:

- Performance optimization – reducing inefficiencies hidden in flight paths or navigation behaviour

- Safety analysis – identifying risky zones and near-miss patterns

- Training validation – comparing novice vs expert behaviour spatially

- Autonomy development – understanding how systems actually behave in real environments

Crucially, this shifts analysis from outcome-based (“did it work?”) to behaviour-based (“why did it work or fail?”).

Long-term implication: teams that understand behaviour spatially can iterate with confidence. Those that rely solely on abstract metrics risk shipping systems they cannot fully explain or trust.

Spatial Analytics Is Becoming Foundational

XR teams already treat spatial analytics as non-optional. Without it, they cannot confidently improve training, usability, or performance.

Drones, robotics, and autonomous systems are reaching the same inflection point.

As environments become more complex and autonomy increases, understanding behaviour in space is no longer a nice-to-have; it’s foundational.

XR analytics provides a clear reference model for what this looks like in practice: track behaviour, replay what occurred, and analyze patterns in space.

Teams building autonomous systems now face the choice XR teams faced years ago: continue relying on partial abstractions, or adopt analytics that preserve spatial truth.

A Proven Spatial Analytics Model from XR

XR teams already rely on spatial analytics to prove what works, diagnose failure, and drive measurable outcomes.

Cognitive3D was built around this model from the ground up: tracking behaviour, replaying real sessions, and analyzing spatial patterns to support confident decision-making.

Our spatial analytics model was built to preserve behavioural truth in 3D environments, and it is now directly applicable to autonomous systems.

The same analytical principles found in XR now apply wherever behaviour unfolds in 3D space.

What’s Next

In the next article in this series, we’ll explore how drone companies use spatial data to improve autonomy, safety, and training, and why replay and spatial comparison are becoming essential tools for teams working with real-world autonomous systems.