The Team Behind the Metrics, the Models, and the Evidence

Your Data Answers Harder Questions Than Your Dashboard Can Ask

Cognitive3D captures everything that happens inside XR — movement, attention, decisions, and performance. The platform makes that data visible. The data science team is where it becomes evidence.

We design the composite scores and the platform reports. We build custom analyses that answer the questions clients bring to us. And we work directly with customers to connect spatial behaviour data to the outcomes that matter to their business.

Two PhDs in Human Spatial Behaviour

One focus: making XR evidence measurable.

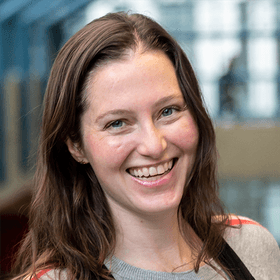

Dr. Nicola Anderson

Head of Data Science

Eye–head coordination & visual attention. Postdoctoral work at UBC studying gaze dynamics in immersive environments. Leads custom analytics engagements, directs the team's research programme, and makes spatial behaviour data accessible to client teams.

Dr. Mona Zhu

Data Scientist

Master of Data Science (UBC). MA & PhD in Cognitive Psychology (Waterloo). Expertise in spatial cognition, human factors, and perceptual processes in 3D environments. Designs and maintains the platform's composite scores, develops data pipelines, and supports custom client analytics.

Four Capabilities. One Goal: Turn Spatial Data Into Decisions.

Platform Metrics

Composite scores — Cyberwellness, Ergonomics, Presence, App Performance — grounded in spatial behaviour research. Interpretable sub-components, not black boxes.

Custom Analytics & Reporting

Bespoke analysis scoped to your questions. A/B testing, retention modelling, spatial heatmaps, KPI tracking, training effectiveness.

Benchmarking & Standards

Cross-application benchmarks from hundreds of millions of minutes of XR data. Comfort budgets, performance baselines, quality thresholds.

Integration Strategy

Custom deployment guides scaled to your needs — from lightweight consumer app instrumentation to enterprise-wide rollout playbooks.

We Design the Scores the Platform Reports

Cyberwellness

Quantifies the comfort profile of the experience. Metrics are grounded in peer-reviewed research on the causes of VR sickness.

Ergonomics

Evaluates physical strain from headset orientation and controller positioning. Horizontal, forward, and vertical reach. Roll and pitch strain.

Presence

Quantifies immersion. Sub-components include spatial coverage, controller movement, gaze exploration, and user immersion.

App Performance

Benchmarked performance, degree of impact, consistency, and fluctuation. Overall scores use weighted averages and geometric means.
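As a rough illustration of why a composite might use both aggregations, the sketch below compares a weighted average with a geometric mean over the same sub-scores. The sub-score values, the equal weights, and the 0-100 scale are assumptions made for this example only; they are not the platform's actual weights or scoring formula.

```python
import math


def weighted_average(scores, weights):
    """Arithmetic mean of scores, each scaled by its weight."""
    if len(scores) != len(weights):
        raise ValueError("scores and weights must have the same length")
    total_weight = sum(weights)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    return sum(s * w for s, w in zip(scores, weights)) / total_weight


def geometric_mean(scores):
    """n-th root of the product of scores; requires strictly positive scores."""
    if not scores or any(s <= 0 for s in scores):
        raise ValueError("scores must be non-empty and strictly positive")
    # Summing logs avoids overflow from multiplying many scores together.
    return math.exp(sum(math.log(s) for s in scores) / len(scores))


# Hypothetical sub-scores on a 0-100 scale (illustrative values only).
sub_scores = [90.0, 90.0, 40.0]
equal_weights = [1.0, 1.0, 1.0]

arithmetic = weighted_average(sub_scores, equal_weights)
geometric = geometric_mean(sub_scores)

# The geometric mean is pulled down further by the single weak sub-score.
print(f"weighted average: {arithmetic:.1f}, geometric mean: {geometric:.1f}")
```

A geometric mean never exceeds the equally weighted arithmetic mean of the same positive values, so one weak sub-component drags the overall score down more than it would in a plain average.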

You Have a Question About Your XR Experience. We Answer It With Data.

A/B Testing

Onboarding variants, UI alternatives, and interaction paradigms, compared on measurable outcomes.

Retention Modelling

Churn prediction and analysis across user lifecycle stages.

Spatial Heatmap Analysis

3D navigation patterns, attention hotspots, and spatial behaviour mapping.

KPI & OKR Tracking

Ongoing measurement against the metrics that matter to your business.

Training Effectiveness

Learning outcomes, skill transfer, and performance improvement in XR training.

Quality Is Not a Spectrum. It Is a Threshold.

The data science team maintains cross-application benchmarks built from hundreds of millions of minutes of XR behaviour data. These benchmarks provide context that no single application can generate on its own: what comfort profiles look like across app categories, where performance thresholds sit for different devices, and what “good” looks like for ergonomics, presence, and session quality.

For enterprise customers, these benchmarks have operational consequences. Quality is not a spectrum in enterprise; it is a threshold. Teams above it succeed. Teams below it lose the deployment.

Every Deployment Is Different. Your Strategy Should Be Too.

Consumer & Independent Apps

- Focused instrumentation guide

- Event taxonomy & SDK configuration

- Key engagement & retention metrics

- Optimised for quick integration

- Lightweight & self-serve

Enterprise Deployments

- Full-stack analytics with custom instrumentation

- Bespoke data models & pipeline design

- Advanced spatial & behavioural analytics

- Dedicated DS team embedded in your workflow

- Scales with your programme & evolving questions

Three Patterns From Analysing Hundreds of Millions of Minutes of Spatial Data

The following case studies are drawn from real client engagements. Details have been anonymised, but the patterns and outcomes are representative of the work the data science team delivers.

The Onboarding That Predicted Retention

A VR fitness application tested three onboarding variants. The action-oriented variant won across every metric: longer first sessions, lower quit rate, faster return, higher frequency through sessions two and three.

First-session experience is a surprisingly strong predictor of long-term retention. The first two to five minutes carry disproportionate weight.

The Comfort Ceiling That Cut Off Monetisation

A fast-paced action game had strong mechanics, but 75% of sessions lasted under five minutes. The comfort ceiling was cutting off monetisation before users reached the conversion window.

The Quality Gap That Predicted Adoption

A large-scale enterprise training deployment spanned multiple VR applications built by different developers. Adoption rates varied from 3% to 90%. Apps with better performance and comfort saw dramatically higher adoption.

Engagements Start Light and Grow as Questions Get Harder

Integration Strategy

What to track, how to configure the SDK, and which metrics matter. From focused consumer instrumentation to full enterprise deployment playbooks.

Project-Based Analysis

Scoped investigations with clear deliverables. A/B tests, comfort audits, benchmarking reports, user journey analysis. Question in, recommendation out.

Ongoing Embedded Support

Regular reporting, KPI tracking, and iterative analysis as your product evolves: reporting cadences, tracking dashboards, and insights over time.

Let's Find Out What Your Data Is Telling You

Whether you need a one-time analysis or ongoing data science support, the team is ready.