Cognitive3D in 2022: Introducing the Consumer Closed Beta

2021 was a breakthrough year for XR as businesses realized the consequences of COVID are here to stay, at least for the short term. XR has played a bigger role in forming meaningful social connections between people, for both work and play.

These conditions have opened up acceptance of hybrid and remote working situations, as well as a rethinking of how things used to be done and how to change them moving forward. Throughout 2021 we saw the continued use of XR across many different verticals.

Facebook's rebrand to Meta was a milestone event for the immersive technology industry in 2021. It introduced the concept of the “metaverse” to mainstream consciousness, and the term became the buzzword of the year. For many people, it was the first time they were confronted with these ideas and their implications for our society.

It’s been exciting and overwhelming to see how much the metaverse has seeped into the societal consciousness. For example, Meta had the most popular app in Apple’s App Store on Christmas Day: the Oculus virtual reality app.

We have seen the signs leading up to this XR adoption inflection point for years. The technology has become more advanced, costs have gone down and the tools are more accessible. More and more companies understand the benefits of distributed learning and immersive experiences.

New beginnings in 2022

2021 was a big year for us at Cognitive3D. We developed new partnerships and enabled a number of enterprises to measure and scale their XR applications. We also had the opportunity to attend our first live event in more than two years, AWE 2021 in Santa Clara.

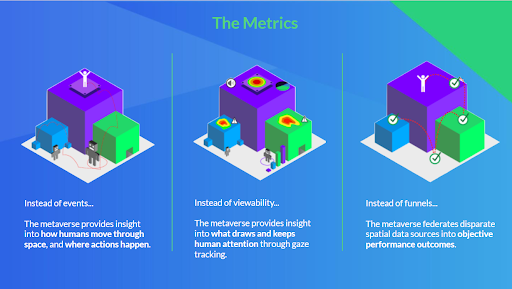

We met many interesting people, and we learned through our conversations that there was a lot of demand for a spatial analytics solution. Many of the current tools are not native to 3D simulations. Instead, they take traditional analytics concepts and try to fit them into the metaverse.

This doesn't work because user participation within the metaverse is completely different from traditional platforms. Users have agency to contribute to the experience rather than act as passive observers.

We decided to go back to our roots. Cognitive3D was originally launched in 2016 for games and entertainment, but we quickly pivoted to enterprise to keep the business going because the market wasn't ready at that time.

At this point on the adoption curve, we feel there is now enough of a market to support consumer-based applications again.

We want to provide new creators, entrepreneurs and others with the proper tools to extract insights from the metaverse. There is nothing on the market that can fulfill these needs so we decided to open up our platform to help them measure the metaverse.

Ultimately, we plan to launch a freemium model with a very generous free tier in 2022, and we will look to monetize breakout customers or folks who need advanced capabilities.

To do that, we want to ensure that the platform is battle-tested for games and entertainment customers. While we have operated at scale before, we don't know what we don't know, and we are looking for some consumer apps to join a closed beta and help us learn.

Interested in getting involved?

We are looking for consumer-facing VR games or applications that have launched on the Oculus Store, Viveport, Steam, or any combination of these.

- A 3D-based experience with six degrees of freedom built in Unity or Unreal

- Access to source code, with the ability to integrate a 2 MB SDK

- We are not supporting eye tracking or biometrics for this closed beta.

What we need from you:

- Implement the SDK within your application. We are happy to support you over Zoom to keep this process as short as possible.

- Work with Cognitive3D to ensure your Privacy Policy is in line with our requirements.

- Once tested and working, ship the analytics capability in your next build.

- Use the product and suggest how it could be improved for your needs. Report any bugs, issues or concerns.

What features do you get?

- Full access to Cognitive3D with unlimited minutes, sessions and participants for one year, which includes:

- SceneExplorer: an exact replay of how a user interacted in their session, rendered in WebGL with spatial data and a timeline.

- ObjectExplorer: aggregate metrics of how users interacted with all 3D objects represented in sessions.

- Scene Viewer: aggregate spatial data such as gaze, fixation, position and events. Supports real-time filtering.

- Analysis Tool: powerful event query system that considers spatial data and context

- Objectives: measurement of sequential and non-sequential multi-step behaviors present within sessions

- Dedicated and named customer support engineer, with access to Slack or Intercom

- A committed partner looking to help solve problems and optimize experiences in immersive applications.

How do I get started?

Drop an email to beta@cognitive3d.com or contact us through our website to apply.